How to Find Reliable Data for Web Scraping?

Accuracy, validity, and reliability are among the most important things to check when looking for data for any purpose, and even more so when that data comes from web scraping. Top-quality, up-to-date data is crucial for running a business today, and its accuracy and validity are key factors in driving business success.

Digital businesses need accurate and valid data to craft insights and gain business intelligence that allows them to discover new business opportunities, expand into new markets, attract more customers, and beat their competitors.

However, you can’t derive actionable, competitive insights without reliable and trustworthy data sources. Because so much depends on data today, and anyone can publish content online, finding reliable data sources has become quite challenging in the digital business landscape.

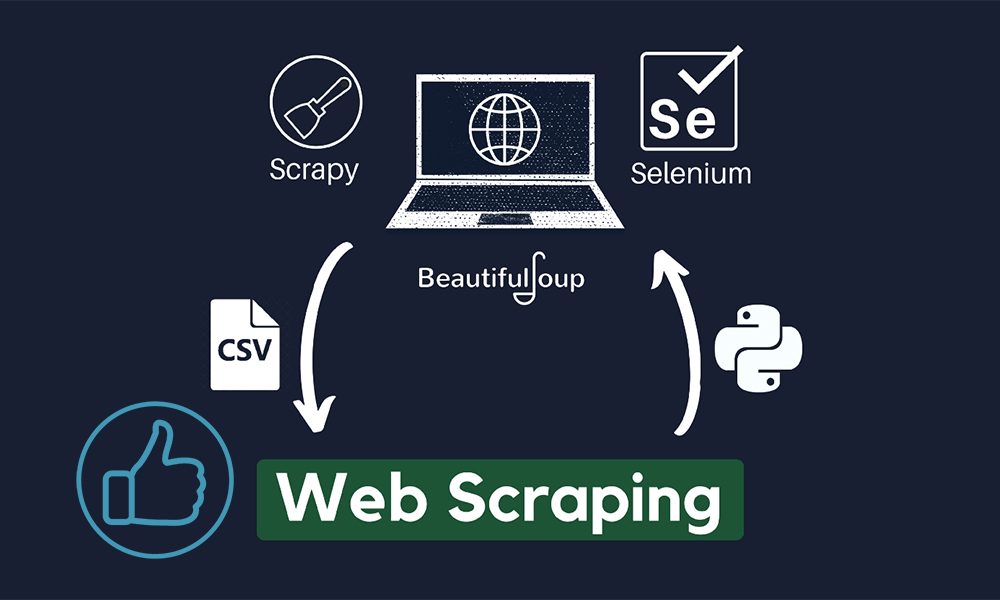

Thankfully, web scraping tools can help target top-quality websites and extract relevant and accurate data according to your needs.

Poor Web Scraping is Equal to No Web Scraping

Reliable data is essential for success in online business. It allows brands to beat their competitors, win more market share, and keep their customers satisfied. It also improves decision-making, helping businesses become an authority in their niche and beyond.

Here are some top reasons why reliable data is essential for driving business success today:

- Reliable data helps improve decision-making, allowing businesses to stay ahead of their competitors and drive innovation in their respective industries.

- With better decisions comes more profit.

- Reliable data gives businesses a competitive advantage.

- Reliable and accurate data is the best way to develop better business efficiencies.

- Reliable data helps digital businesses target the right audiences and provide their customers with products and services that can address real-life problems.

Whether you need data to discover better opportunities, attract more customers, beat your competitors, or achieve any other business goal, the information you use must be relevant, accurate, and up-to-date.

Quality of Internet Data

It’s not a secret that data-driven decisions can help a business in many different ways, from improving the overall performance to increasing revenues. While big data can provide actionable insights for achieving your goals, it all comes down to the quality of data you use to accomplish your mission.

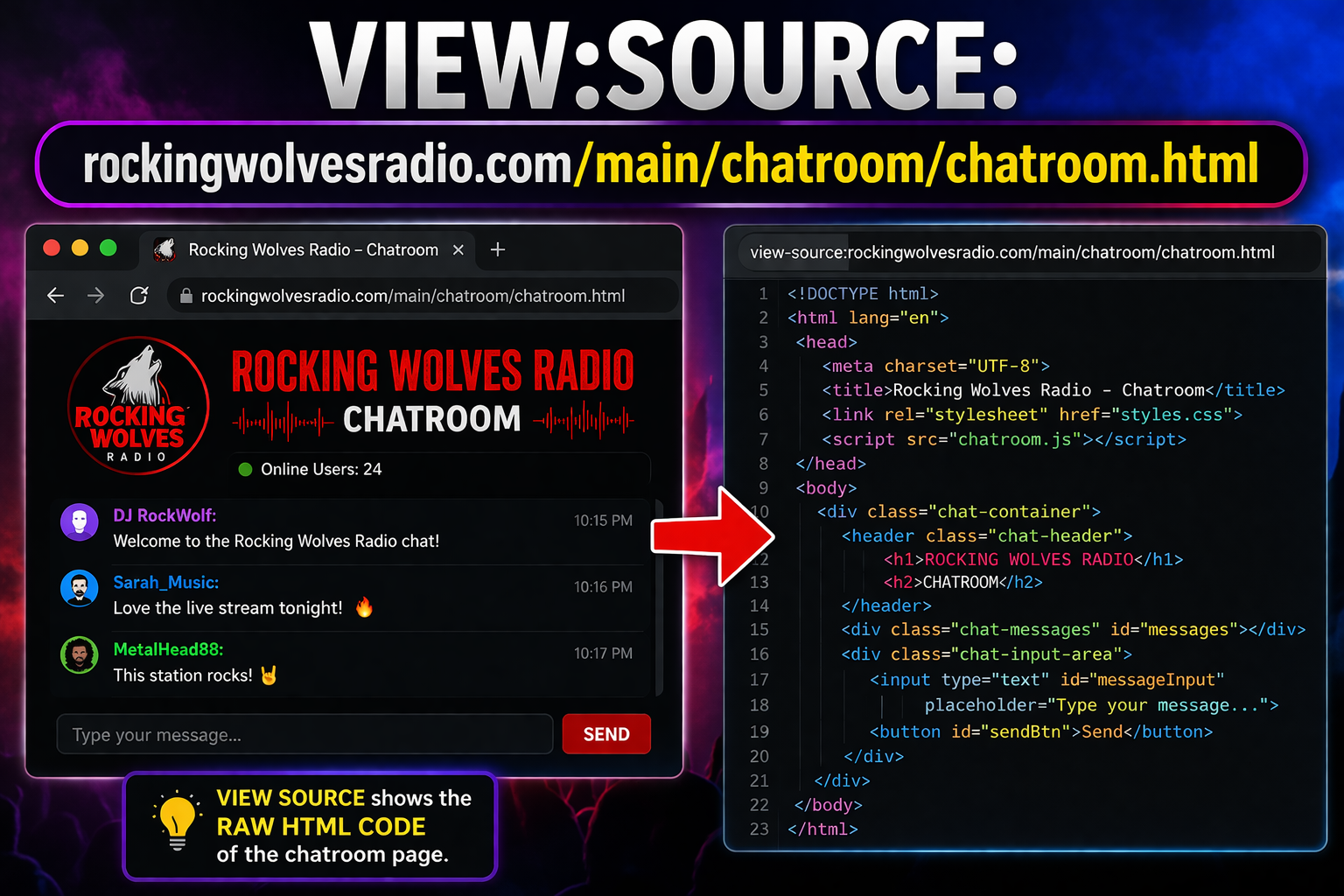

The internet is swarming with billions of websites. Anyone with an internet connection can publish some content on the web. It is up to you to browse through all that low-quality content and discover high-end data sources that can provide trustworthy, up-to-date content that you can benefit from.

Web scraping can help you target websites with high-quality data and filter their content so that you extract only the data of value. Backconnect proxies support this by letting your scraper reach websites with trustworthy data reliably.

Backconnect proxies are gateway servers that route requests through a rotating pool of residential proxies, so you can extract data from target websites anonymously and securely.
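
In practice, a scraper usually reaches a backconnect proxy through a single gateway address supplied by the proxy provider. The sketch below shows one way to send requests through such a gateway using only Python's standard library. The gateway URL, credentials, and the `build_proxied_opener` name are placeholders invented for illustration, not any provider's real endpoint or API.

```python
import urllib.request

# Hypothetical gateway address; a real one comes from your proxy provider.
PROXY_URL = "http://username:password@gateway.example.com:8000"


def build_proxied_opener(proxy_url=PROXY_URL):
    """Build an opener that sends HTTP and HTTPS requests through one proxy."""
    handler = urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    return urllib.request.build_opener(handler)
```

Calling `build_proxied_opener().open(url)` would then fetch `url` through the gateway, which decides which address from its pool handles each request.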

Evaluation is Very Important

When you’re looking for top-quality data, the first thing you need to do is to make sure the sources you choose are relevant to your business needs. To do that, you need to evaluate a data source to ascertain if its content holds any real value for your digital business.

Evaluation allows you to ascertain the following things:

- Relevance: whether the data matters to your business.

- Accuracy: whether the facts and figures valuable to your organization are correct.

- Validity: whether the data meets the set criteria and comes in the correct format.

- Currency: whether the data is up to date, so you can avoid outdated information.

- Completeness: whether the data is complete and well-structured enough to turn into actionable insights.

- Consistency: whether multiple versions of the data agree, without differences or variations.
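
Some of these checks can be automated once you have a sample of records from a candidate source. The following is a minimal sketch, assuming each record is a dictionary with `name`, `price`, and `scraped_at` fields. That schema, the 30-day cutoff, and the function names are assumptions made for illustration. Relevance and accuracy still need human judgment or a trusted reference to compare against.

```python
from datetime import datetime, timedelta

# Hypothetical schema: the fields every scraped record is expected to carry.
REQUIRED_FIELDS = ("name", "price", "scraped_at")


def evaluate_record(record, now, max_age_days=30):
    """Return a list of quality problems found in one scraped record."""
    problems = []

    # Completeness: every required field is present and non-empty.
    missing = [f for f in REQUIRED_FIELDS if record.get(f) in (None, "")]
    if missing:
        problems.append("incomplete: missing " + ", ".join(missing))

    # Validity: the price must parse as a non-negative number.
    price = record.get("price")
    if price not in (None, ""):
        try:
            if float(price) < 0:
                problems.append("invalid: negative price")
        except (TypeError, ValueError):
            problems.append("invalid: price is not a number")

    # Currency: the record must not be older than the cutoff.
    scraped_at = record.get("scraped_at")
    if isinstance(scraped_at, datetime):
        if now - scraped_at > timedelta(days=max_age_days):
            problems.append("outdated: older than %d days" % max_age_days)

    return problems


def find_inconsistencies(records, key="name", field="price"):
    """Consistency: list keys whose field value differs between versions."""
    seen = {}
    for record in records:
        if record.get(key) is None:
            continue  # records without a key cannot be compared
        seen.setdefault(record[key], set()).add(record.get(field))
    return sorted(k for k, values in seen.items() if len(values) > 1)
```

A source whose sample records return many problems from `evaluate_record`, or many keys from `find_inconsistencies`, is a weaker candidate than one whose records pass cleanly.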

Evaluating before collection is paramount if you want to find top-class data sources. Now, let’s take a look at some of the best ways to discover trustworthy data sources.

Consider Blocks Against Bots

Even though backconnect proxies can help bypass the blocking and detection mechanisms that target websites use, you should avoid websites and servers that block scraping bots. Aside from the legal implications, getting detected and then banned or blocked can mean losing data or extracting useless, incomplete data.
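
One signal of how a website treats bots is its `robots.txt` file. As a rough sketch, the snippet below checks a URL against rules that have already been downloaded, using Python's standard `urllib.robotparser` module. The sample rules and the `my-bot` user agent are invented for illustration, and `robots.txt` only states the site's wishes: it does not reveal what active blocking the site performs.

```python
from urllib.robotparser import RobotFileParser

# Example rules, invented for illustration.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def is_scraping_allowed(robots_txt, user_agent, url):
    """Check a URL against the rules of an already-downloaded robots.txt."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)
```

With the sample rules, `is_scraping_allowed(SAMPLE_ROBOTS_TXT, "my-bot", "https://example.com/private/data")` is disallowed, while a URL outside `/private/` is allowed.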

Look at Link Health

If your target website has broken links, you should avoid it. Your scraping bots need working links to reach more useful and relevant data. Broken links can disrupt the scraping process, and the failed requests they cause may get your bots blocked.
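
Link health can be estimated before a full scrape by sampling a page and measuring how many of its links fail. The sketch below is one possible approach using only the standard library. The helper names and the choice to count any status of 400 or above, or an unreachable URL, as broken are assumptions for illustration.

```python
from html.parser import HTMLParser
from urllib.error import HTTPError
from urllib.parse import urljoin
from urllib.request import Request, urlopen


class LinkCollector(HTMLParser):
    """Collect the absolute http(s) URL of every <a href> in a page."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        if href and not href.startswith("#"):  # skip in-page anchors
            url = urljoin(self.base_url, href)
            if url.startswith(("http://", "https://")):  # skip mailto: etc.
                self.links.append(url)


def http_status(url, timeout=10):
    """Return the status of a HEAD request, or None if the URL is unreachable."""
    try:
        with urlopen(Request(url, method="HEAD"), timeout=timeout) as response:
            return response.status
    except HTTPError as error:
        return error.code
    except OSError:  # covers URLError and timeouts
        return None


def broken_link_ratio(html, base_url, get_status=http_status):
    """Return the share of links on a page that fail to resolve."""
    collector = LinkCollector(base_url)
    collector.feed(html)
    if not collector.links:
        return 0.0
    broken = 0
    for url in collector.links:
        status = get_status(url)
        if status is None or status >= 400:
            broken += 1
    return broken / len(collector.links)
```

The `get_status` parameter lets you swap in a cached or rate-limited checker, which keeps the health check itself from hammering the target website.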

Navigation and Design

Poor web design and navigation are also red flags that something is off. If you’re not sure about a data source, you can use search engines to check it before you rely on it. Search engines rate websites on elements such as design, responsiveness, functionality, and searchability, so a website that ranks high can generally be considered trustworthy.

Data Relevance

Websites ranked at the top of search results are generally trustworthy enough to contain relevant data. Scrape only relevant websites that contain fresh data. Search engines prefer websites that regularly update their content, so look for such data sources.
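
One rough way to check freshness yourself is the `Last-Modified` HTTP response header, which many servers send along with a page. The sketch below judges a header value against a cutoff. The 90-day default and the `is_fresh` name are assumptions for illustration, and because not every server sends this header or keeps it accurate, treat the result as a hint rather than proof.

```python
from datetime import datetime, timedelta, timezone
from email.utils import parsedate_to_datetime


def is_fresh(last_modified, now, max_age_days=90):
    """Return True if a Last-Modified value is within max_age_days of now."""
    if not last_modified:
        return False  # no header: freshness cannot be confirmed
    try:
        modified_at = parsedate_to_datetime(last_modified)
    except (TypeError, ValueError):
        return False  # unparseable date
    if modified_at.tzinfo is None:
        # Treat dates without a usable zone as UTC.
        modified_at = modified_at.replace(tzinfo=timezone.utc)
    return now - modified_at <= timedelta(days=max_age_days)
```

Here `now` is expected to be a timezone-aware datetime such as `datetime.now(timezone.utc)`, and `last_modified` is the raw header string from a response.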

Conclusion

The internet is an abundant source of data, but the problem is that most of the available data on the web is low-quality, unstructured, and outdated. If you need relevant data for business needs, you should take the time to carefully inspect every data source before you use it.